This post is a sample of the large-scale and commercial work I have done as the lead creative technologist at Fake Love in NYC.

Lexus – Trace Your Road – Life Sized Video Game – Rome, Italy – 2013

My role: I was one of the lead technical developers and designers for this piece, along with Dan Moore and Ekene Ijeoma. Programmed in openFrameworks on OS X and iOS.

Lexus | TRACE YOUR ROAD | Director’s Cut from Fake Love on Vimeo.

——————————–

AmEx Instagram Towers – Fashion Week – Lincoln Center, NYC – 2012

My role: Lead technical architect on the hardware and interaction. Also programmed by Caitlin Morris. Made with openFrameworks.

Amex Fashion Week Instagram Towers from Fake Love on Vimeo.

———————————

NY Pops Gala 2012 – Interactive Conductor's Baton – Carnegie Hall, NYC – 2012

My role: I was the programmer and tech lead on this project. Devised the tracking system, custom baton, software and design. Made with openFrameworks and Max/MSP/Jitter.

NY Pops | Gala 2012 from Fake Love on Vimeo.

———————————-

Google Project Re:Brief Coke – Interactive Vending Machine – Worldwide – 2011

My role: I was the lead tech for the installation/physical side of this project (another company did the banners and web server portion). I did the vending machine hacking, setup and programming in New York, Cape Town, Mountain View and Buenos Aires. This project went on to win the first Cannes Lions mobile award. Other programming and hardware hacking by Caitlin Morris, Chris Piuggi, and Brett Burton. Made with openFrameworks.

Project Re:Brief | Coke from Fake Love on Vimeo.

—————————-

Shen Wei Dance Arts – Undivided Divided – Park Avenue Armory, NYC – 2011

My role: Lead projection designer, programmer, and live show visualist. I designed the entire 12-projector system for this Shen Wei premiere at the Park Avenue Armory. I also programmed and maintained the playback system for the 5-night run of the show. Made with Max/MSP/Jitter and VDMX.

Shen Wei | Park Avenue Armory from Fake Love on Vimeo.

——————————-

Shen Wei Dance Arts – Limited States – Premiere – 2011

My role: Lead projection designer, programmer and live show visualist. I designed the playback and technology system for this new piece by choreographer Shen Wei. I also contributed heavily to the visual effects programming seen in some of the pre-rendered clips. Made with Max/MSP and VDMX.

Shen Wei – Limited States from Fake Love on Vimeo.

——————————–

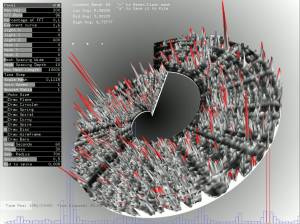

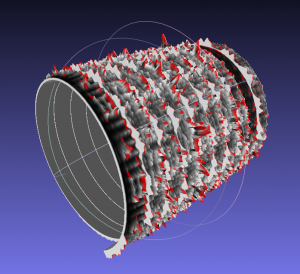

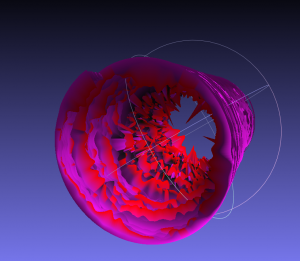

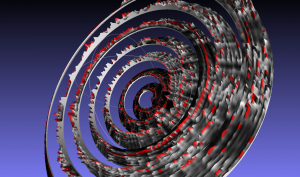

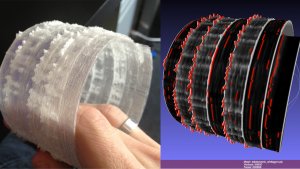

Sonos – Playground and Playground Deconstructed – SXSW and MOMI NYC – 2013

My role: I was the technical designer of the hardware and projections for this audio-reactive immersive piece. Red Paper Heart was the lead designer and developer on this project, which they made with Cinder.

PLAYGROUND DECONSTRUCTED from Fake Love on Vimeo.