Optical feedback is a classic visual effect that results when an image capture device (a camera) is pointed at a screen that is displaying the camera’s output. This can create an image that looks like cellular automata/reaction-diffusion or fractals and can also serve as a method of image degradation through recursion.

Many video artists have used this technique to create swirling patterns as a basis for abstract videos, installations and music videos. Feedback can also be created digitally by various means, including continually reading and drawing textures in a frame buffer object (FBO), but the concept is essentially the same. In this post I’m writing up a thought experiment for a project that would create the biggest optical feedback loop in the world.

Sample of analog video feedback:

Optical Feedback Loop from Adam Lucas on Vimeo.

Sample of video feedback (digital rendering from software):

I really enjoy the various forms of this effect from immediate feedback loops to “slower” processes like image degradation. Years ago, I did a few projects involving video feedback and image degradation via transmission, and this thought experiment combines those two interests. Lately, I’ve also been obsessed with really unnecessarily excessive, Rube Goldberg-like uses of technology, and this fits that interest pretty well. It’s like playing a giant game of Telephone with video signals.

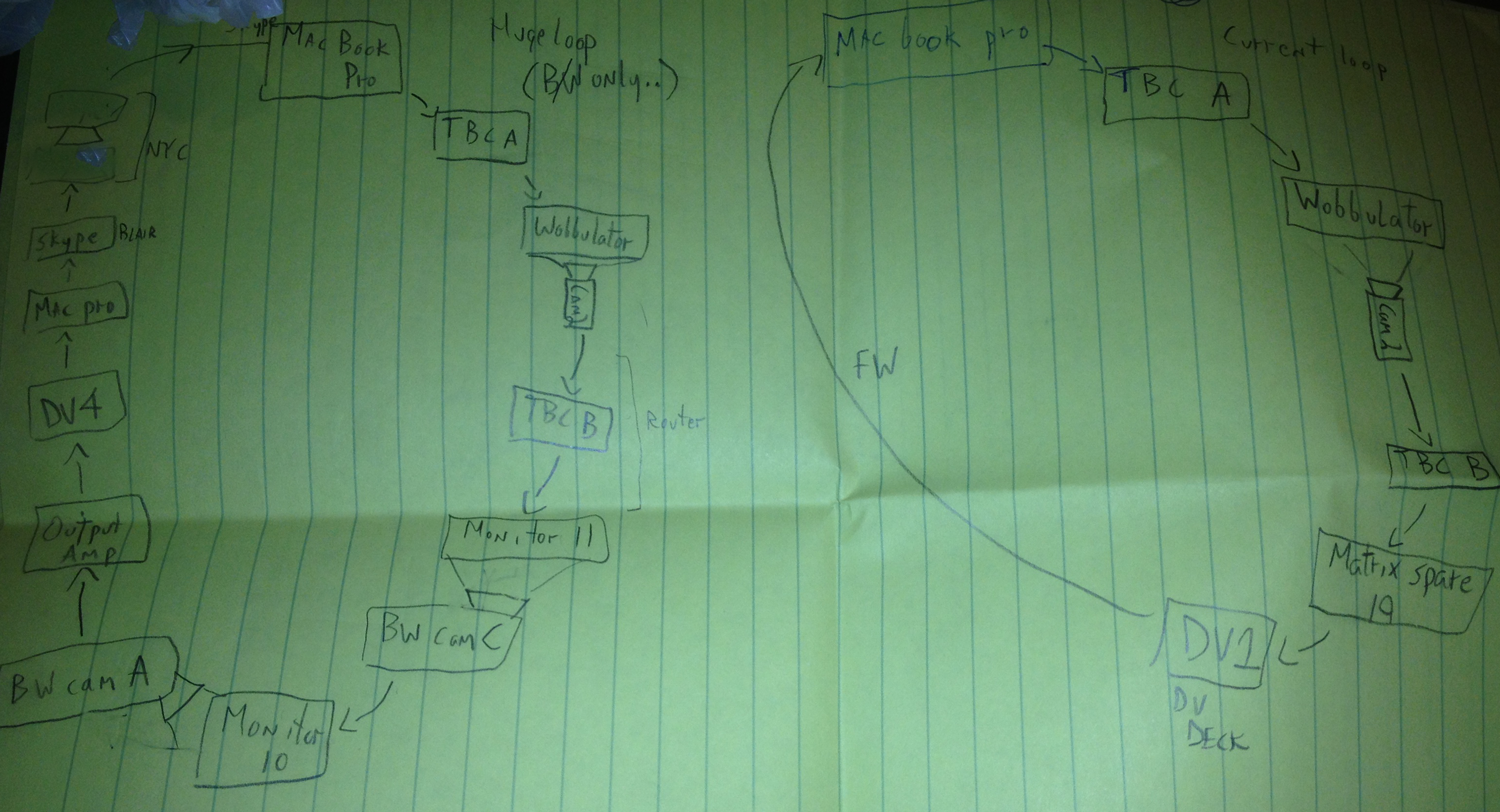

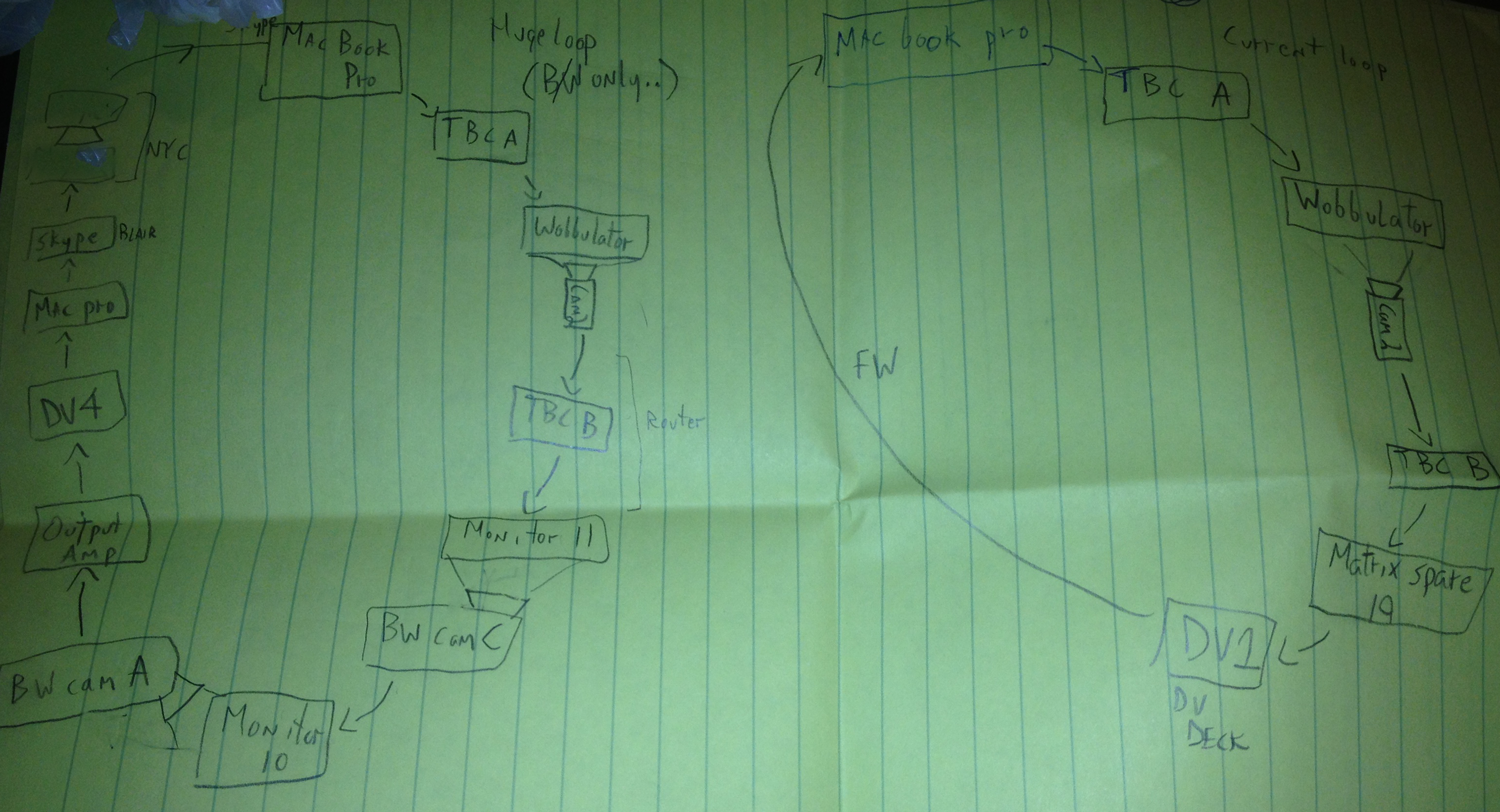

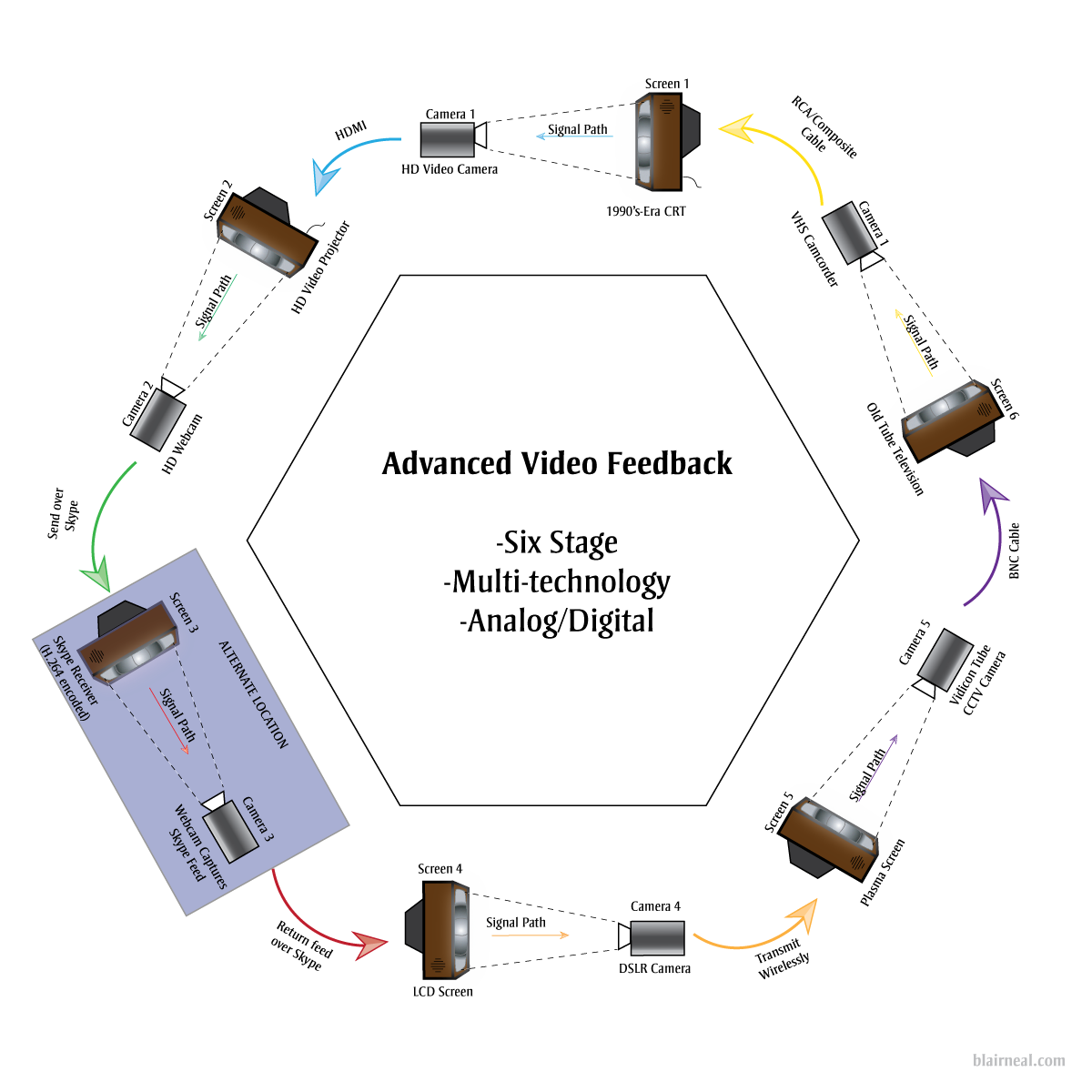

While in residency at the Experimental Television Center in 2010, I was surrounded by cameras, monitors and 64-channel video routers. After a few sessions playing with feedback on the Wobbulator, I drew up a sketch for making a large video feedback loop using all of the possible equipment in the lab…and a Skype feed for good measure. Here is that original sketch:

The eventual output of the large feedback loop didn’t look the best: the setup was a little hacky and lost detail very quickly due to camera auto adjustments and screens being too bright. The actual time delay through the whole system, including Skype, was still just a few frames. There were also several decades between pieces of equipment and a break between color and black & white feeds at certain points. I’ve returned to the idea a few times and I’ve wanted to push it a little further.

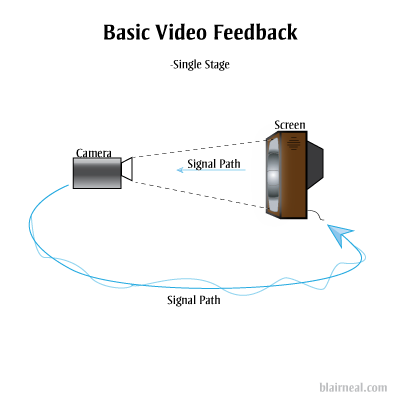

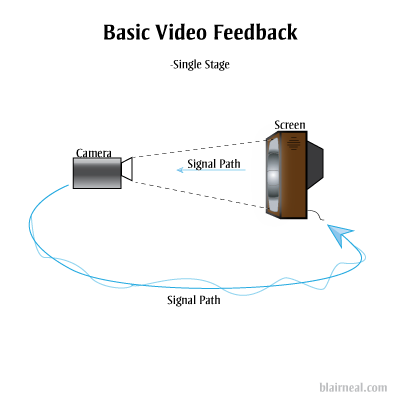

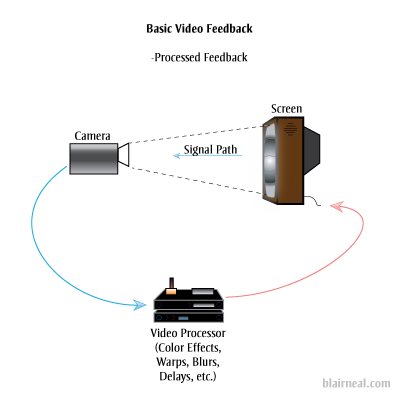

As a refresher, this is the most basic form of optical feedback, just a camera plugged into a screen that it is capturing.

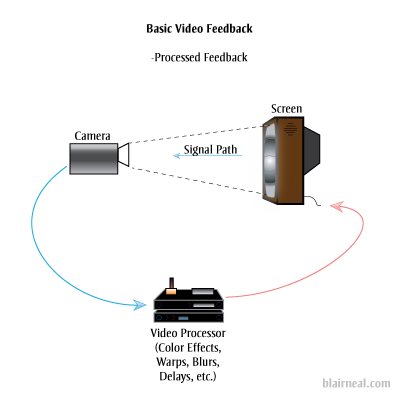

You can also add additional processors into the chain that affect the image quality (delays, blurs, color shifts, etc). Each of these effects will be amplified as it passes through the loop.
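A toy model can make that amplification concrete. The sketch below is only an illustration under simple assumptions: one scanline is a list of brightness values from 0 to 1, and a single processor is modeled as a 3-tap blur followed by a gain. The function names `blur` and `run_loop` and all the numbers are made up for this example.

```python
def blur(line):
    """3-tap box blur with edge clamping: a stand-in for one soft lens or processor."""
    n = len(line)
    out = []
    for i in range(n):
        left = line[max(i - 1, 0)]
        right = line[min(i + 1, n - 1)]
        out.append((left + line[i] + right) / 3.0)
    return out


def run_loop(line, passes, gain=1.0):
    """Feed the line back through blur + gain `passes` times, clipping to [0, 1]."""
    history = [line]
    for _ in range(passes):
        line = [min(1.0, max(0.0, gain * v)) for v in blur(line)]
        history.append(line)
    return history


# A single bright pixel on a dark scanline (assumed test pattern).
start = [0.0] * 21
start[10] = 1.0

history = run_loop(start, passes=8)
for n, line in enumerate(history):
    print(f"pass {n}: peak brightness = {max(line):.3f}")

# A gain slightly above 1 on a flat grey line: every pass brightens it until it clips.
blown_out = run_loop([0.5] * 5, passes=20, gain=1.05)[-1]
print("grey line after 20 passes at gain 1.05:", blown_out)
```

A blur that is barely visible in one pass smears the pixel further on every trip around, and a gain only 5% too high ends up as a fully white line, which is roughly what a too-bright screen does to a real loop.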

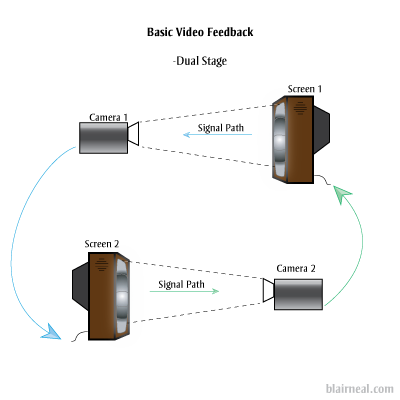

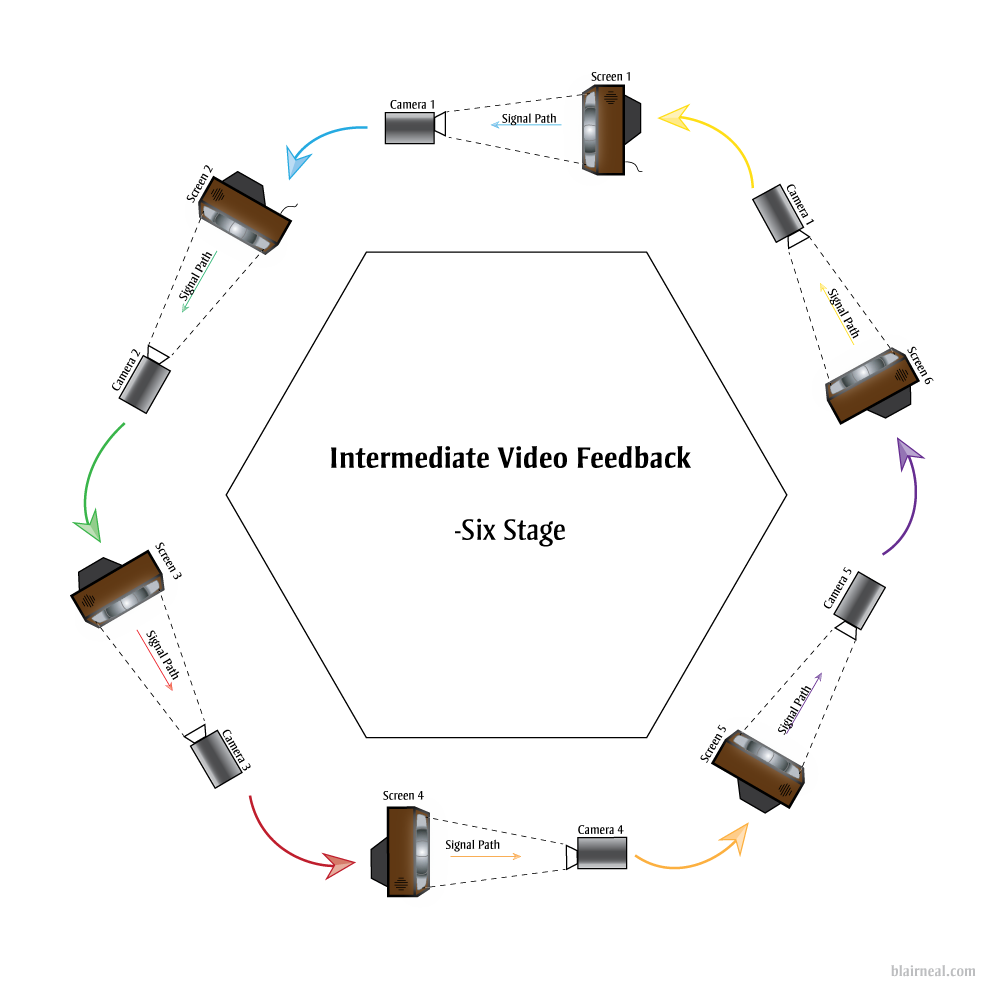

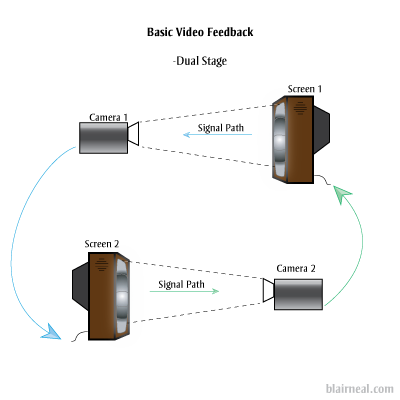

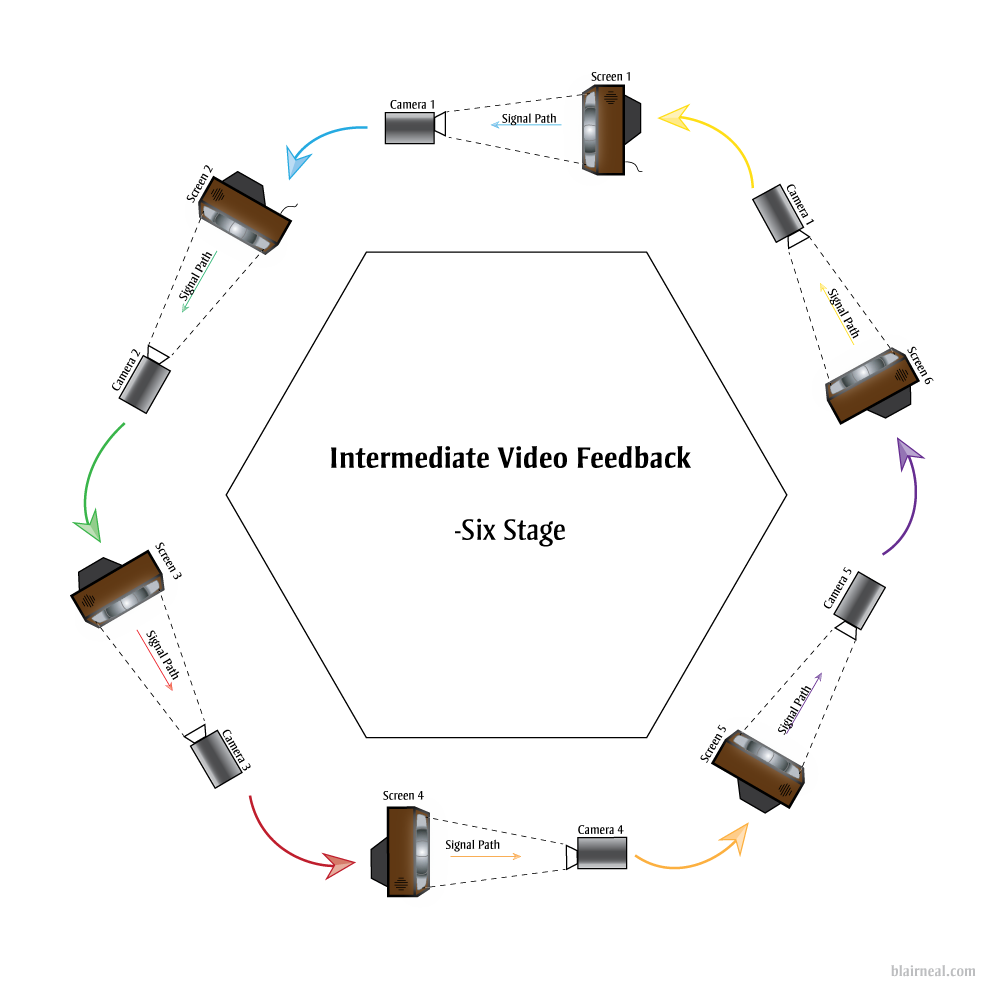

The above are the most common and straightforward techniques of optical feedback. They will generate most of the same feedback effects as the larger systems I’m proposing, generally with a shorter delay and less degradation. It doesn’t hurt to ask what will happen if we add another stage to the feedback system:

We’ll lose a little more image quality now that the original photons have been passed through twice as many pieces of glass and electronics. Let’s keep passing those photons around through more stages. You could put a blinking LED in front of one of the screens and have it send its photons through all the subsequent screens as they are transformed digitally and electrically. The LED’s light would arrive behind it in some warped, barely perceivable fashion, but it would really just be a sort of ghost of the original photons.

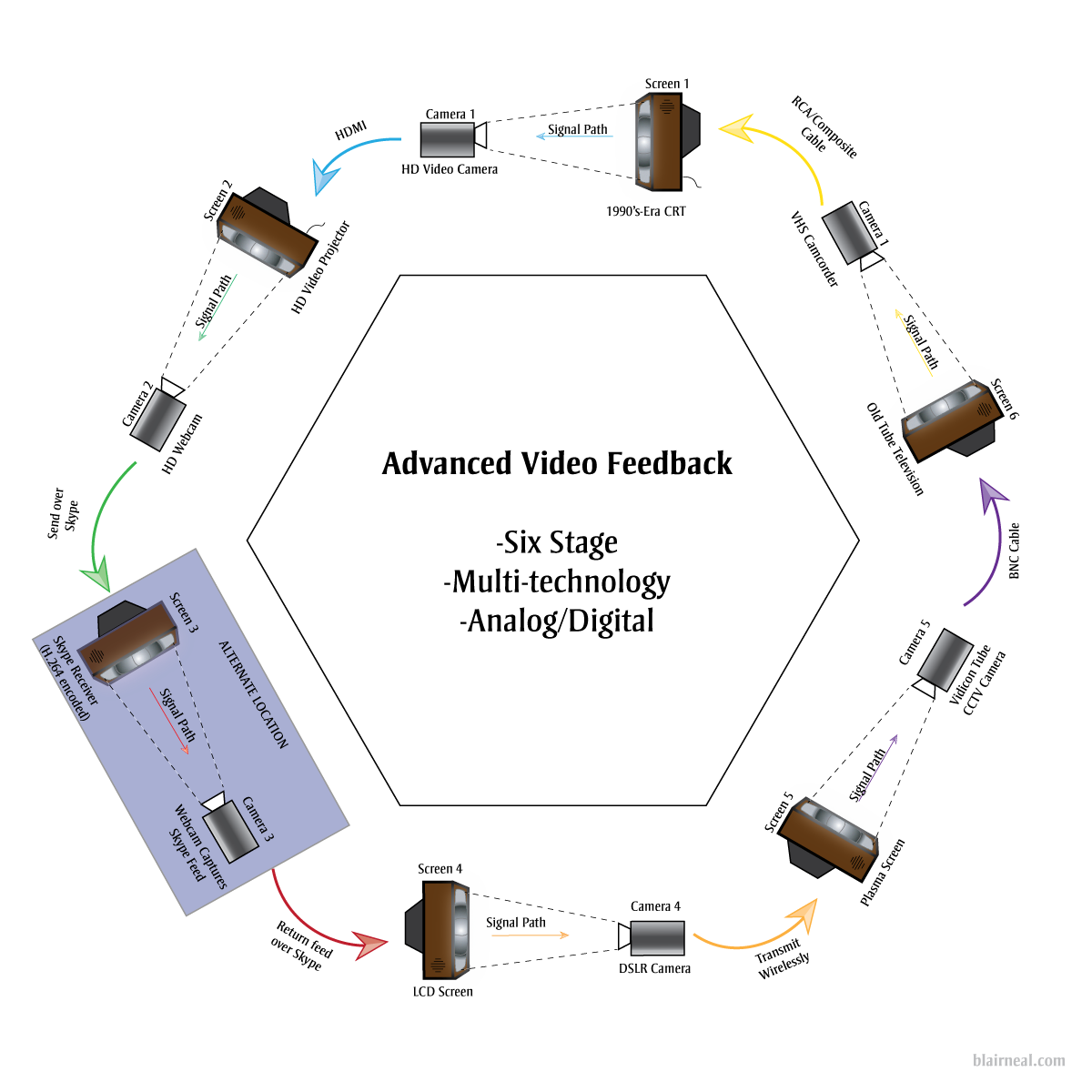

We can take the above example of a 6-stage video feedback loop and start working out what we might need to hit as many image and screen technologies as we can think of from the past 50 years. Video art’s Large Hadron Collider.


By hitting so many kinds of video processing methods we would get a video output that would be just a little delayed, and would create some interesting effects at certain points in the chain. By varying camera resolutions, camera capture methods, and analog versus digital technologies, we can bounce the same basic signal through all of these different sensor and cable types. The signal would become digital and analog at many different stages depending on the final technologies chosen. The digital form of the signal would have to squeeze and stretch to become analog again. The analog signal would need to be sampled, chopped and encoded into its digital form. Each of these stages would have its own conversions happening between:

- Video standards/Compressions (NTSC, PAL, H.264, DV, etc.)

- Resolutions/Drawing methods (1080p, 480p, 525 TV Lines)

- Voltages

- Refresh Rates

- Scan methods (CMOS, CCD, Vidicon Tube)

- Illumination methods (LED, Fluorescent Backlight, CRT)

- Wire types

- Pixel types

- Physical transforms (passing through glass lenses, screens), etc.
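To get a feel for what a chain of conversions like this does to a signal, here is a rough sketch that only models two items from the list: resolution changes and the number of brightness levels a stage can represent. The stage labels and numbers in `STAGES` are invented for illustration, not a proposed equipment list, and real conversions (codecs, scan methods, optics) are far messier than this.

```python
import math


def resample(line, new_len):
    """Nearest-neighbour resample: a crude stand-in for a resolution change."""
    old_len = len(line)
    return [line[min(old_len - 1, int(i * old_len / new_len))] for i in range(new_len)]


def quantize(line, levels):
    """Snap each sample to one of `levels` evenly spaced values in [0, 1]."""
    step = levels - 1
    return [round(v * step) / step for v in line]


# Hypothetical stages: (label, samples per scanline, brightness levels).
STAGES = [
    ("HD camera", 1920, 256),
    ("SD monitor", 720, 256),
    ("webcam + codec", 640, 64),
    ("old tube camera", 480, 32),
]


def run_chain(line, stages):
    """Pass the line through every stage in order and return the final line."""
    for _label, width, levels in stages:
        line = quantize(resample(line, width), levels)
    return line


def mean_abs_error(a, b):
    """Compare two lines of different length by resampling b to len(a) first."""
    b = resample(b, len(a))
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# A smooth test pattern: one scanline of a sine-wave gradient.
source = [0.5 + 0.5 * math.sin(2 * math.pi * 8 * i / 1920) for i in range(1920)]

once = run_chain(source, STAGES)
print(f"{len(once)} samples, {len(set(once))} distinct brightness levels")
print(f"mean error vs source: {mean_abs_error(source, once):.4f}")
```

The coarsest stage in the chain sets a ceiling on what survives: however good the first camera is, the line that comes out has no more samples and no more brightness levels than the weakest stage allowed.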

By adding in broadcast and streaming technologies like Skype, we can extend the feedback loop not only locally within one area, but also globally. One section of the chain can be sent across the globe to another studio running a similar setup with multiple technologies. This can continue being sent around to more and more stations as long as the end is always sent back to the first monitor in the chain.

A digital feedback or video processing step could also be added, so that several chains of purely digital feedback occur inside the larger loop as well.
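As a minimal sketch of what such a digital step does, the example below keeps the previous frame around, redraws it slightly shifted and dimmed, and then draws the live input on top. This is a plain-Python stand-in for reading one texture while drawing into another, not real FBO code; the tiny frame size, the one-pixel shift and the `decay` value are all assumptions chosen to keep it readable.

```python
WIDTH, HEIGHT = 16, 8


def blank():
    """Return an all-black frame as a list of rows."""
    return [[0.0] * WIDTH for _ in range(HEIGHT)]


def feedback_step(previous, live, decay=0.8):
    """Redraw the previous frame one pixel to the right and dimmed, with live input on top."""
    nxt = blank()
    for y in range(HEIGHT):
        for x in range(WIDTH):
            echo = previous[y][x - 1] * decay if x > 0 else 0.0
            nxt[y][x] = min(1.0, max(live[y][x], echo))
    return nxt


# Live input: a single lit pixel that stays on for every frame.
live = blank()
live[4][2] = 1.0

frame = blank()
for _ in range(6):
    frame = feedback_step(frame, live)
    # Show row 4 as text: '#' bright, '+' dim, '.' dark.
    print("".join("#" if v > 0.5 else "+" if v > 0.1 else "." for v in frame[4]))
```

Each frame adds one more, dimmer copy of the pixel, which is the digital version of the hall-of-mirrors trail you get from pointing a camera at its own monitor.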

If you were able to create a system large enough, there could be enough processing happening that the signal itself would be delayed for a few seconds before it returns to the “original” start location. In this large system, you could wave your hand in between a monitor and camera, and get a warped “response” back from yourself a second or two later.
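A back-of-the-envelope way to think about that delay is to add up the latency of every hop in the ring. The per-hop delays and hop counts below are guesses made up purely for illustration (nothing here was measured), but the arithmetic shows how a loop could get from “a few frames” to a second or more.

```python
FRAME_MS = 1000 / 30  # roughly one video frame at 30 fps

# Hypothetical delay per hop, in milliseconds. Illustrative guesses only.
HOP_DELAY_MS = {
    "analog camera to CRT": 1,
    "digital camera to LCD": 50,
    "capture and software processing": 100,
    "video call to another studio": 300,
}

# Hypothetical loop: how many hops of each kind the ring contains.
LOOP = {
    "analog camera to CRT": 4,
    "digital camera to LCD": 6,
    "capture and software processing": 2,
    "video call to another studio": 2,
}


def loop_delay_ms(loop, hop_delay_ms):
    """Total time for a change in the image to travel once around the ring."""
    return sum(count * hop_delay_ms[kind] for kind, count in loop.items())


total = loop_delay_ms(LOOP, HOP_DELAY_MS)
print(f"one trip around the ring: {total} ms, about {total / FRAME_MS:.0f} frames")
```

With these made-up numbers the analog hops contribute almost nothing, and nearly all of the delay comes from the digital and networked stages, so those are the hops to multiply if the goal is a visible lag.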

It’s interesting for me to consider what the signal would be at this point, after going through so many conversions and transforms. Is the signal a discrete moment as it passes from monitor to screen, or does it somehow keep some inherent properties as it fires around the ring?

Suggested Links:

http://softology.com.au/videofeedback/videofeedback.htm